We also discuss the creation of the different embedding models in detail. We hope that our work serves as a stepping stone to better embeddings for low-resource Indian languages. The models' parameters can be trained further for specific tasks or to improve their performance in general. At present, evaluations have been carried out on only a few of these tasks. As our main contribution, these word embedding models are being publicly released. Also, with newer embedding techniques being released in quick succession, we hope to include them in our repository. In the future, we aim to refine these embeddings and carry out a more exhaustive evaluation over various tasks, such as POS tagging for all these languages and NER for all Indian languages, together with a word analogy task.


Further, we describe the preprocessing and tokenization of our data. The collected corpora are intended to be general-domain rather than domain-specific, and hence we begin by collecting general-domain corpora via Wikimedia dumps. We also add corpora from various crawl sources to the respective individual language corpus. All of the corpora are then cleaned, with the first step being the removal of HTML tags and links, which can occur due to the presence of crawled data. Then, foreign-language sentences (including English) are removed from each corpus, so that the final pre-training corpus contains words from only its own language.
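The first cleaning steps described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names (`strip_markup`, `is_in_script`, `filter_corpus`) are hypothetical, and detecting foreign-language sentences by Unicode script name is an assumption, since the text does not say how such sentences are identified.

```python
import re
import unicodedata

# Assumed patterns: anything between angle brackets is treated as an HTML tag,
# and anything starting with http://, https://, or www. is treated as a link.
TAG_RE = re.compile(r"<[^>]+>")
URL_RE = re.compile(r"(?:https?://|www\.)\S+")


def strip_markup(text):
    """Remove HTML tags and links, then collapse runs of whitespace."""
    text = TAG_RE.sub(" ", text)
    text = URL_RE.sub(" ", text)
    return re.sub(r"\s+", " ", text).strip()


def is_in_script(sentence, script_prefix):
    """Return True if every letter in the sentence belongs to the given script.

    The script is identified by the prefix of the Unicode character name,
    e.g. "DEVANAGARI" for Hindi or "TAMIL" for Tamil (an assumed heuristic).
    """
    for ch in sentence:
        if ch.isalpha() and not unicodedata.name(ch, "").startswith(script_prefix):
            return False
    return True


def filter_corpus(sentences, script_prefix):
    """Strip markup from each sentence and drop foreign-script sentences."""
    cleaned = (strip_markup(s) for s in sentences)
    return [s for s in cleaned if s and is_in_script(s, script_prefix)]
```

For example, `filter_corpus(lines, "DEVANAGARI")` would keep only the lines whose letters are all Devanagari. A script check of this kind cannot separate languages that share a script (Hindi and Marathi, for instance), so a real pipeline would likely need a language identifier as well.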


Related work also exists for the Urdu language, which is one of the Indian languages we do not cover in this work. To evaluate the quality of those embeddings, they were tested on Urdu translations of English similarity datasets. We collect pre-training data for 14 Indian languages (from a total of 22 scheduled languages in India): Assamese (as), Bengali (bn), Gujarati (gu), Hindi (hi), Kannada (kn), Konkani (ko), Malayalam (ml), Marathi (mr), Nepali (ne), Odiya (or), Punjabi (pa), Sanskrit (sa), Tamil (ta) and Telugu (te).

We have created a comprehensive set of standard word embeddings for multiple Indian languages. The overall size of all the aforementioned models was too large to be hosted on a Git repository. We therefore host each of these embeddings in a downloadable ZIP format on our server, which can be accessed via the link provided above. We release a total of 422 embedding models for 14 Indic languages. The models comprise four dimensions (50, 100, 200, and 300) each of GloVe, Skipgram, CBOW, and FastText; one ELMo model for each language; and a single model each of BERT and XLM covering all languages.

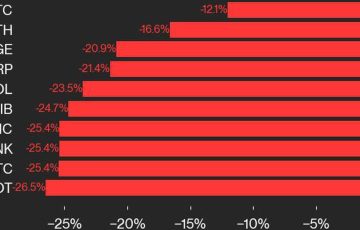

Figure 1: Performance of skip-gram, CBOW, and fastText models on the POS tagging task. The plotted graph is accuracy vs. dimension.

Along with foreign languages, numerals written in any language are also removed. Once these steps are completed, paragraphs in the corpus are split into sentences using sentence-end markers such as the full stop and question mark. Following this, we also remove any special characters, which may include punctuation marks (for example, hyphens and commas).

There is a prevailing scarcity of standardised benchmarks for testing the efficacy of various word embedding models for resource-poor languages. We performed experiments across the few standardised datasets that we could find, and created new evaluation tasks as well to test the quality of non-contextual word embeddings.
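The numeral removal, sentence splitting, and special-character removal described above can be sketched as follows. This is a minimal sketch under stated assumptions, not the authors' code: the function names are hypothetical, numerals are taken to be Unicode decimal digits (category `Nd`), and "special characters" are taken to be the Unicode punctuation and symbol categories.

```python
import re
import unicodedata


def remove_numerals(text):
    """Drop decimal digits from any script (Unicode category Nd)."""
    return "".join(ch for ch in text if unicodedata.category(ch) != "Nd")


def split_sentences(paragraph, end_markers=".?"):
    """Split a paragraph on sentence-end markers (full stop and question mark).

    The markers themselves are discarded. Other markers, such as the danda,
    could be passed in via end_markers; that is an assumption, not from the text.
    """
    marker_class = re.escape(end_markers)
    pieces = re.findall(rf"[^{marker_class}]+", paragraph)
    return [p.strip() for p in pieces if p.strip()]


def remove_special_chars(sentence):
    """Replace punctuation and symbols with spaces, then collapse whitespace.

    Combining marks (vowel signs, anusvara, and so on) are category M and are
    deliberately kept, since they are part of Indic words.
    """
    kept = [" " if unicodedata.category(ch)[0] in "PS" else ch for ch in sentence]
    return " ".join("".join(kept).split())


def preprocess(paragraph):
    """Apply the three steps in the order given in the text."""
    sentences = split_sentences(remove_numerals(paragraph))
    cleaned = [remove_special_chars(s) for s in sentences]
    return [s for s in cleaned if s]
```

Removing numerals before splitting means a decimal point inside a number no longer has digits on either side, though it would still be treated as a sentence-end marker by this simple splitter.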